selective amnesia

Selective amnesia: “Amnesia about particular events that is very convenient for the person who cannot remember”. It is a phenomenon in mammals in which our brains decide which distracting memories are irrelevant, and those memories are forgotten. We can mimic this by teaching an AI to ‘fix’ the data from which its biases might arise. For the datasets, this means deleting the bias-creating instances and rearranging all of the remaining data.
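The two steps named above, forgetting and rearranging, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure behind this piece: the `Record` type, its `bias_flag` field and the `selective_amnesia` function are hypothetical. The sketch assumes every instance has already been marked as bias-creating or not (deciding that is the hard part and is not shown), and it reads “rearranging” as a reproducible shuffle.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    text: str
    label: str
    bias_flag: bool  # hypothetical: True if this instance was judged to create bias


def selective_amnesia(records, seed=0):
    """Forget the bias-creating records, then rearrange what remains."""
    # Step 1: delete ("forget") every instance flagged as bias-creating.
    kept = [r for r in records if not r.bias_flag]
    # Step 2: rearrange the remaining data; a seeded RNG keeps the order reproducible.
    rng = random.Random(seed)
    rng.shuffle(kept)
    return kept


data = [
    Record("a", "x", False),
    Record("b", "y", True),
    Record("c", "x", False),
]
cleaned = selective_amnesia(data)
print(len(cleaned))  # 2
```

The input list is left untouched; only the returned copy has “forgotten” the flagged instance.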

Every single person has biases: emotional inclinations or preconceived opinions towards something or someone. I believe most people assume that an AI is cold and brutally logical, but in fact an AI can have biases as well. This is a very human phenomenon, and it’s fascinating that AIs reflect this same cognitive behavior. In a way, it’s beautiful to see these unimaginably intricate systems sharing hidden similarities with us. Still, as we use them in complex decision-making, we want their findings to be unequivocally precise. There is no room for false logic on the AI’s part; there is already too much of it in human reasoning.

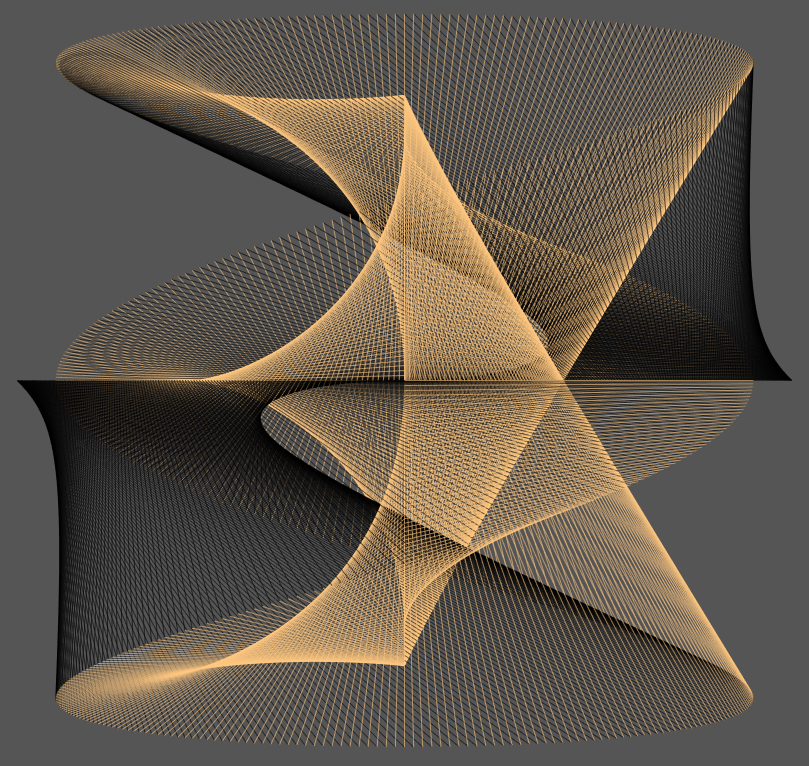

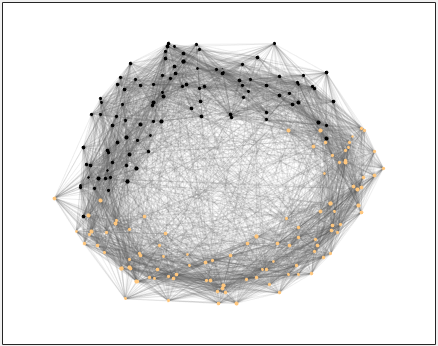

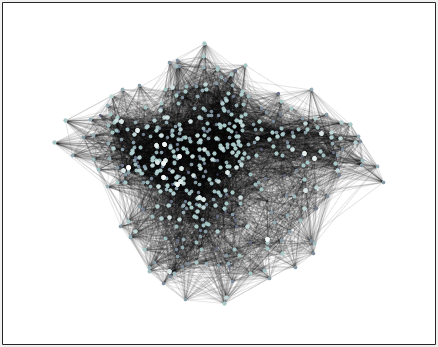

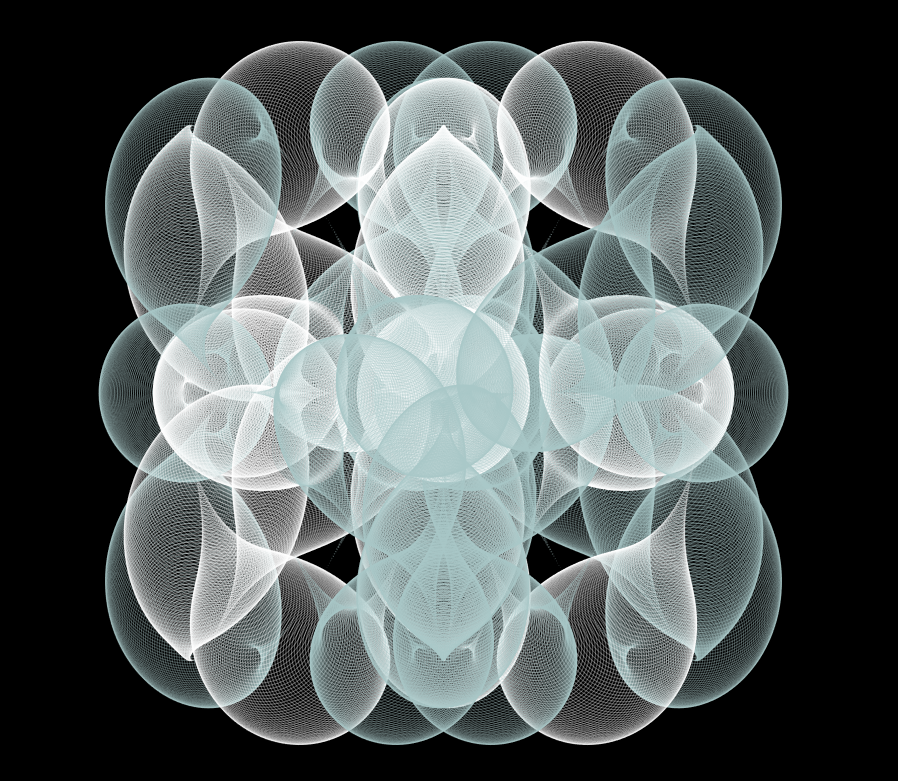

So, selective amnesia. By itself, this procedure is a pure form of art, one that recognizes qualities shared by AI and humans. The ‘lapsed’ data can also be reconstructed from network graphs into visual art, reprised through an alternative method that does not display its binary characteristics but only substantiates its existence.